AI scribes are rapidly transforming medical documentation—but many physicians are asking a critical question:

Are AI scribes safe to use in clinical practice?

The answer is nuanced. While AI scribes can improve efficiency, they also introduce risks related to accuracy, clinical reasoning, and legal responsibility.

Quick Answer: Are AI Scribes Safe?

AI scribes can be safe when carefully reviewed by physicians, but they are not inherently reliable or risk-free.

Most AI scribe systems rely on transcription and AI-generated summaries, which can introduce errors, omissions, or hallucinations.

Physicians remain fully responsible for verifying all documentation.

What Is an AI Scribe?

An AI scribe (also called an ambient AI scribe or AI medical scribe) is software that listens to physician-patient conversations and automatically generates clinical notes.

These systems are designed to:

- Reduce typing

- Automate documentation

- Improve efficiency

However, most AI scribes are built on transcription-based models, meaning they capture what was said—but not always what was clinically intended.

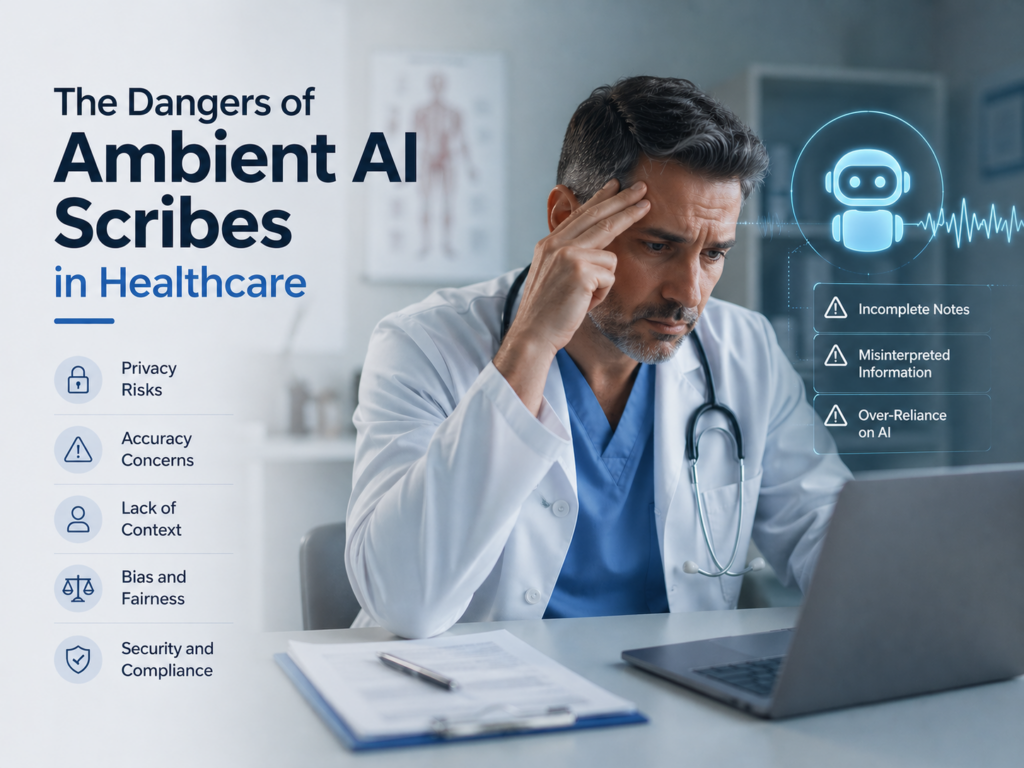

The Core Safety Risks of AI Scribes

While AI scribes can reduce administrative burden, they introduce several important safety concerns.

1. Inaccurate Documentation

AI scribes may misinterpret clinical conversations, especially when:

- Patients describe symptoms imprecisely

- Physicians speak in shorthand

- Complex medical reasoning is implied rather than stated

Even small inaccuracies can affect diagnosis, treatment decisions, and billing.

2. AI Hallucinations

One of the most serious risks is AI hallucination—when the system generates information that was never discussed.

Examples may include:

- Adding symptoms that were not reported

- Inferring diagnoses without basis

- Misrepresenting treatment plans

These errors can create false medical records, which pose both clinical and legal risks.
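
For teams evaluating a tool, one way to make this risk concrete is to compare a generated note against the visit transcript and flag sentences the transcript does not appear to support. The sketch below is only an illustration under stated assumptions: the function names, the four-letter word filter, and the 0.5 threshold are hypothetical choices, not how any particular AI scribe product works. A word-overlap check like this will miss paraphrased fabrications and can falsely flag legitimate clinical synonyms, so it supplements physician review and never replaces it.

```python
import re


def tokens(text):
    """Lowercase words of four or more letters (a crude content-word filter)."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if len(w) >= 4}


def unsupported_sentences(note, transcript, min_overlap=0.5):
    """Return note sentences whose content words mostly do not appear in the transcript.

    min_overlap is a hypothetical threshold chosen for illustration only.
    """
    transcript_words = tokens(transcript)
    flagged = []
    # Split the note into sentences at whitespace that follows ., ! or ?
    for sentence in re.split(r"(?<=[.!?])\s+", note.strip()):
        words = tokens(sentence)
        if not words:
            continue
        overlap = len(words & transcript_words) / len(words)
        if overlap < min_overlap:
            flagged.append(sentence)
    return flagged


# Made-up example data: the second note sentence was never said in the visit.
transcript = "Patient reports a dry cough for three days. No fever. Advised rest and fluids."
note = (
    "Patient reports dry cough for three days. "
    "Patient complains of chest pain. "
    "Advised rest and fluids."
)

flagged = unsupported_sentences(note, transcript)
print(flagged)  # ['Patient complains of chest pain.']
```

A flagged sentence is a prompt to look closer, not a verdict: the physician still decides whether the statement belongs in the record.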

3. Loss of Clinical Context

Medicine depends on interpretation—not just conversation.

AI scribes often capture the dialogue but:

- Miss the clinical reasoning behind it

- Fail to reflect decision-making processes

This can result in documentation that appears complete—but lacks clinical depth.

4. Increased Editing Burden

AI scribes are often marketed as time-saving tools, but in practice:

- Physicians must review every note

- Corrections can be time-consuming

- Errors may be subtle and easy to miss

In some cases, AI scribes shift the work from writing notes to reviewing and correcting them rather than eliminating it.

5. Legal and Compliance Risk

Medical documentation must be:

- Accurate

- Complete

- Reflective of clinical reasoning

If AI-generated notes contain errors or omissions, physicians remain legally responsible.

This raises concerns around:

- Malpractice risk

- Audit compliance

- Documentation defensibility

When Are AI Scribes Safe?

AI scribes can be used safely under certain conditions:

- Full physician review of every note

- Clear understanding of system limitations

- Use in lower-risk documentation scenarios

- Strong clinical oversight at all times

However, safety depends heavily on how the technology is used—not just the technology itself.
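
The first condition, full physician review of every note, can also be enforced in software rather than left to habit. The following is a minimal sketch, assuming a hypothetical `DraftNote` record with made-up method names and physician IDs; it is not drawn from any real EHR or scribe API. The idea is simply that a note cannot be signed until the signing physician has reviewed its current text.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DraftNote:
    """Hypothetical AI-generated note awaiting physician review."""
    text: str
    reviewed_by: Optional[str] = None
    reviewed_at: Optional[datetime] = None
    signed: bool = False

    def edit(self, new_text: str) -> None:
        # Any change clears the prior review, so the signed text
        # is always the text that was actually reviewed.
        self.text = new_text
        self.reviewed_by = None
        self.reviewed_at = None

    def mark_reviewed(self, physician_id: str) -> None:
        self.reviewed_by = physician_id
        self.reviewed_at = datetime.now(timezone.utc)

    def sign(self, physician_id: str) -> None:
        # Refuse to sign unless this physician reviewed the current text.
        if self.reviewed_by != physician_id:
            raise PermissionError("Note must be reviewed by the signing physician first.")
        self.signed = True


# Made-up usage: signing fails before review and succeeds after it.
draft = DraftNote("Dry cough for three days. Advised rest and fluids.")
try:
    draft.sign("dr_lee")
except PermissionError as err:
    print(err)

draft.mark_reviewed("dr_lee")
draft.sign("dr_lee")
print(draft.signed)  # True
```

A gate like this records that a review happened, not how careful it was, which is why the remaining conditions still matter.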

AI Scribes vs. Safer Alternatives

The key safety issue with AI scribes is that they rely on transcription rather than clinical reasoning.

A safer approach is emerging: systems that reflect physician intent instead of simply recording conversations.

A Safer Approach: Reflective Ambient Intelligence®

Reflective Ambient Intelligence® (RAI) represents a new generation of medical AI.

Instead of transcribing conversations, RAI:

- Reflects the physician’s clinical reasoning

- Preserves authorship and intent

- Produces accurate, defensible documentation

- Learns and improves over time

This approach reduces many of the risks associated with traditional AI scribes.

AI Scribes vs. Reflective Ambient Intelligence®

| Feature | AI Scribes | Reflective Ambient Intelligence® |
| --- | --- | --- |
| Core Function | Transcription | Clinical reasoning reflection |
| Accuracy | Variable | High |
| Hallucination Risk | Present | Reduced |
| Physician Role | Editor | Author |
| Editing Required | High | Minimal |
| Legal Defensibility | Uncertain | Strong |

AI scribes document what was said.

Reflective AI documents what was meant.

How to Use AI Scribes Safely

If you are using or evaluating AI scribe software, follow these best practices:

FAQ: AI Scribe Safety

Final Answer: Are AI Scribes Safe?

AI scribes can improve efficiency, but they are not safe to rely on without physician oversight.

Their reliance on transcription introduces risks related to accuracy, hallucinations, and clinical context.

The safest approach to AI in healthcare is one that preserves physician control and reflects true clinical reasoning.